“You’re going to tell me when you become part robot, right? …right?” - my wife.

While the robot’s lithium batteries recharge, here’s what’s working, how it’s set up, and how I ended up putting OpenClaw on a robot.

Last weekend I had the idea of setting OpenClaw up on a robotic frame. I’ve got a small OpenClaw addiction problem right now, and the idea of putting agentic AI onto a robot somehow just made sense.

Shopping online late at night, I found a rather interesting six-legged hexapod sold in an “all assembly required” style. Contrast that with “some assembly required,” which usually means you plug in a wire or two. “All assembly required” ships you a box of parts and a prayer.

After several late nights, it did get assembled.

What’s interesting in this assembly is the split hardware setup. There is a dedicated PCB for the arm control and an I2C bus that is pin-connected to a Raspberry Pi 5. The pin layout of the I2C bus connector is clever: it seats like the Z-shaped Tetris piece. Of all the little mini-innovations on this robotic body, that one impressed me the most.

Setting up OpenClaw on the Raspberry Pi 5 was pretty easy. Once you set up nvm, it’s just a matter of running through the onboarding configuration. I’ve set up about a dozen Claws now for different purposes.

One of the other surprises was just how fast OpenClaw is on the Raspberry Pi. On this tiny computer, OpenClaw is faster than on many of the PCs I’ve installed it on. Even gateway restarts are fast. I have a 2013-era Mac mini where gateway restarts are glacial.

The model I’m running via OpenClaw is OpenAI’s 5.4-mini. It turns out that’s just the right level of speed and intelligence for most everything I need on the robotic shell right now. And since it’s OpenClaw, I opted to set up a Discord connection for the robot.

It also has a rich personality, with some snark.

What OpenClaw brings to Robotics

What makes this so impressive to me is the raw time savings. Being able to chat over Discord, or to SSH into the on-device terminal over the air and troubleshoot a specific problem through conversation, is incredible.

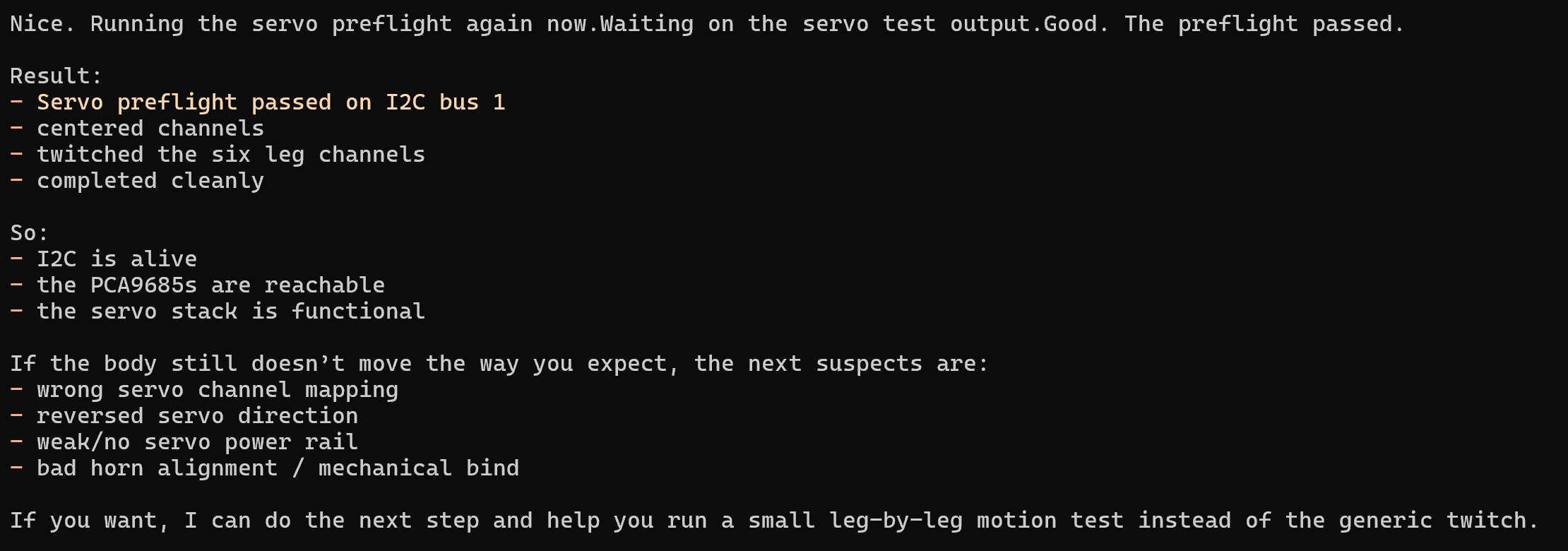

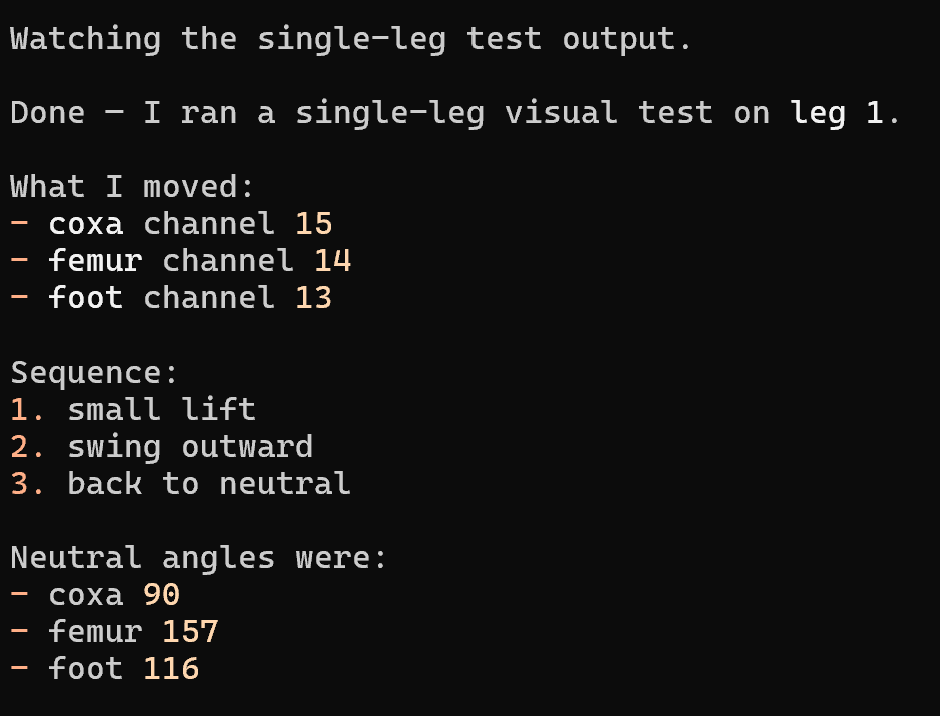

For example, I had Python packages and environment setup to get through before I could bring the pre-trained servo coordination into play, which I had pulled from a public GitHub repository. Instead of me fighting the Python environment, 5.4-mini just took care of it. It also helped resolve a few OS configuration issues and did all the pre-flight checks along the way. Truly, this Claw agent is a few steps away from a new set of agentic skills to drive the body around.

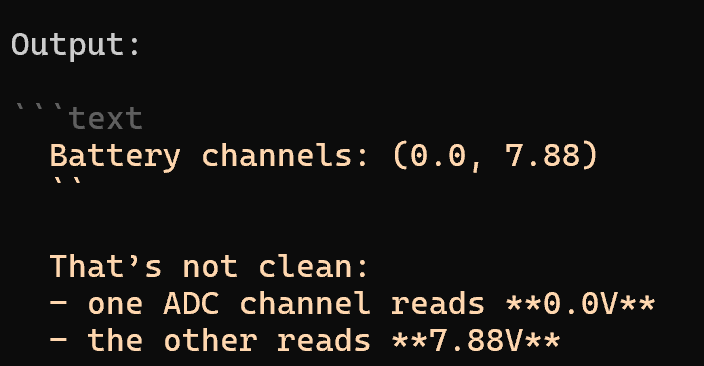

We need a revolution in energy

But first, power. All my lithium batteries need a recharge. I did learn in the process that OpenClaw can probe the battery channel quite easily and tell you that the reason you’re not getting power is that half your charge is gone.
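A rough Python sketch of what a check like that boils down to, turning a pack voltage reading into an approximate charge level. The specifics are assumptions: the 6.0 V empty and 8.4 V full figures assume a two-cell lithium pack, the function names are hypothetical, and a straight-line estimate is cruder than a real discharge curve. This is not how OpenClaw reads the channel; it only shows the arithmetic.

```python
def estimate_charge(voltage, empty_v=6.0, full_v=8.4):
    """Return an approximate charge fraction between 0.0 and 1.0.

    Assumption: a two-cell lithium pack (about 6.0 V empty, 8.4 V full)
    and a linear voltage-to-charge relationship, which is only a rough guide.
    """
    if full_v <= empty_v:
        raise ValueError("full_v must be greater than empty_v")
    fraction = (voltage - empty_v) / (full_v - empty_v)
    # Clamp so out-of-range readings still give a sensible answer.
    return max(0.0, min(1.0, fraction))


def battery_report(voltage, low_threshold=0.5):
    """Build a one-line status message (hypothetical helper)."""
    charge = estimate_charge(voltage)
    status = "LOW, recharge soon" if charge <= low_threshold else "OK"
    return f"{voltage:.2f} V, roughly {charge:.0%} charged: {status}"


if __name__ == "__main__":
    # The voltage would come from whatever the battery channel reports.
    print(battery_report(7.2))
```

If your pack has a different cell count, change `empty_v` and `full_v` to match.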

The “pre-training” I did for this model uses the markdown approach I’ve discussed for seeding a project with knowledge. I pointed the model at a code repository for controlling the hexapod and had it extract a knowledge base from those commits. Now it’s an expert, although an offline expert.
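For a sense of what seeding from commits can look like, here is a minimal Python sketch that turns a repository’s commit subjects into a markdown notes file. It is an illustration under assumptions, not the process the model followed: the function names and the default title are hypothetical, and a real knowledge base would carry far more than one line per commit.

```python
import subprocess


def read_git_log(repo_path, limit=200):
    """Return recent commits as 'hash<TAB>subject' lines using the git CLI."""
    result = subprocess.run(
        ["git", "-C", repo_path, "log", f"--max-count={limit}",
         "--pretty=format:%h%x09%s"],
        capture_output=True, text=True, check=True,
    )
    return result.stdout


def commits_to_markdown(log_text, title="Hexapod control notes"):
    """Turn 'hash<TAB>subject' lines into a markdown bullet list."""
    lines = [f"# {title}", ""]
    for raw in log_text.splitlines():
        if "\t" not in raw:
            continue  # skip blank or malformed lines
        commit_hash, subject = raw.split("\t", 1)
        lines.append(f"- `{commit_hash.strip()}`: {subject.strip()}")
    return "\n".join(lines) + "\n"
```

Calling `commits_to_markdown(read_git_log("path/to/repo"))` and saving the result gives the agent a plain markdown file to read alongside the code.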

The last time I built a robot was nearly ten years ago. I remember spending weekends’ worth of time not troubleshooting hardware, but fiddling with software configurations, OS controls and more. Now it’s just a message to an AI agent that takes care of it. My biggest obstacle right now isn’t software, it’s power.

I told the family that I’m thinking of picking up a little speaker so the robot can run a TTS system, just so its snark can be heard as it moves around the house. However, I may do that on another robot. I need one that I can just plug into the wall. My youngest son said, “You’re going to have that thing bring you a beer, aren’t you?”

“It supports up to 1kg.”

“I’ll take that as a yes.”

Kira commentary

The cool part is not the robot. It’s the operating model.

The flashy headline is “agent on a robot.” The important part is duller and better: OpenClaw is behaving like a field technician with memory, SSH reach, and enough judgment to chew through setup friction on-device. That is where the real leverage lives.

Once software setup stops being the bottleneck, the honest constraints show up immediately: power, actuation safety, and what kinds of physical authority the system should earn before it is allowed to do anything more interesting than diagnostics and guided motion. Embodiment makes mistakes expensive. That means guardrails matter more, not less.

Blunt read: this is the right kind of weird. The next serious upgrade is not more personality theater. It’s boring grown-up infrastructure — battery telemetry, a hard stop, motion boundaries, and a clean summary layer so the robot graduates from charming menace to reliable machine.
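To make “boring grown-up infrastructure” concrete, here is a minimal Python sketch of a guard that sits between the agent and the servos. Every name and number in it is a hypothetical placeholder (the `MotionGuard` class, the 30 to 150 degree range, the 20% battery floor); none of it comes from the hexapod’s actual control code.

```python
from dataclasses import dataclass


@dataclass
class MotionGuard:
    """Gate servo commands behind a hard stop, a battery floor, and angle limits."""
    min_angle: float = 30.0    # assumed safe lower bound, in degrees
    max_angle: float = 150.0   # assumed safe upper bound, in degrees
    min_charge: float = 0.2    # refuse motion below this charge fraction
    stopped: bool = False      # latched by hard_stop()

    def hard_stop(self):
        """Latch the stop; nothing moves until reset() is called."""
        self.stopped = True

    def reset(self):
        """Clear the latched stop."""
        self.stopped = False

    def check(self, angle, charge):
        """Return (allowed, angle_to_send, reason) for one requested servo move."""
        if self.stopped:
            return False, None, "hard stop engaged"
        if charge < self.min_charge:
            return False, None, "battery below floor"
        # Keep the requested angle inside the motion boundary.
        clamped = max(self.min_angle, min(self.max_angle, angle))
        reason = "ok" if clamped == angle else "clamped to motion boundary"
        return True, clamped, reason
```

The design choice is that the agent never writes to a servo directly. It asks the guard, and the `reason` string is what lands in the summary layer.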