The Battery Problem

I ran into a problem with power. I learned that you can absolutely run OpenClaw on a robotics platform, and it is a pretty amazing experience. What is less amazing sits somewhere at the intersection of battery charging and servo complexity. It is no fun when the last message from your robotic platform is “I’m out of power and need to power down.” I mentioned in an article a few days ago that my next experiment would run on outlet power.

Still, the Hexapod is certainly one of my favorite robots I’ve made recently and got me back into working with hardware.

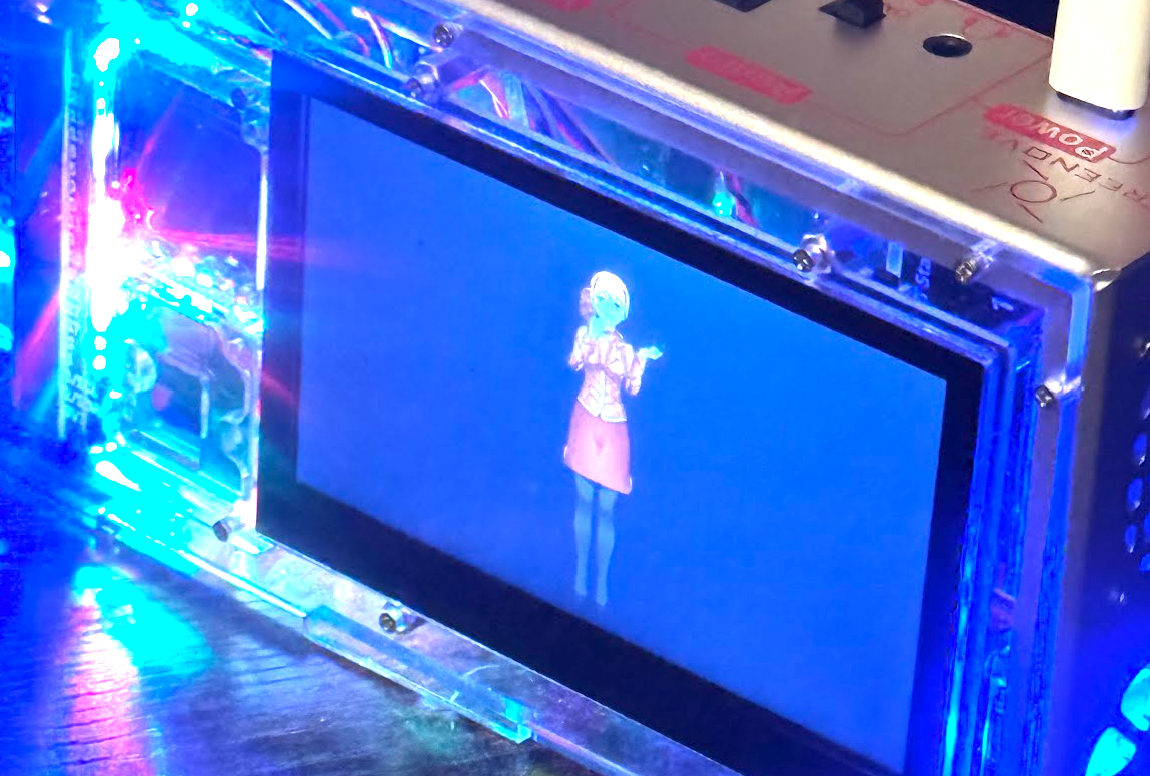

The Hexapod was a fun project, but I wanted something closer to an embodied Claw that can occupy a physical space without the battery requirement. I found my answer in a Raspberry Pi sensor platform.

The Sensorshell Project

Freenove offers an opaque acrylic box that fits a Raspberry Pi 5 and comes with an array of fun sensors and peripherals, such as a mini OLED, a 4.3 inch display, a surprisingly versatile camera, left and right channel speakers, and full on-board USB, HDMI, and expandable storage. It is like someone took the hardware buffet and crammed it into a pizza box. There are two tiers of storage too. I have the standard microSD card that hosts the OS and the OpenClaw runtime, plus a bonus storage pool attached to the device.

Yes, I do need to take off the screen protector sticker from the camera. I have just not gotten to that step yet. This is very much work in progress.

The 4.3 inch panel is also a touchscreen, and the quality of the display is quite high. In fact, it is unreasonably good. It might even be better than my television.

The Puppeteering Dilemma

In the Spring into AI competition, I learned that the models can adapt on the fly and work with puppeteering systems. There is a secret problem with puppeteering systems, however. You need a well-designed puppet, which includes the art. I was ready for a three-week adventure of figuring out which art system I would use to build a puppet I could control programmatically, plus the base set of sprite images to support it.

The Puppeteering Solution

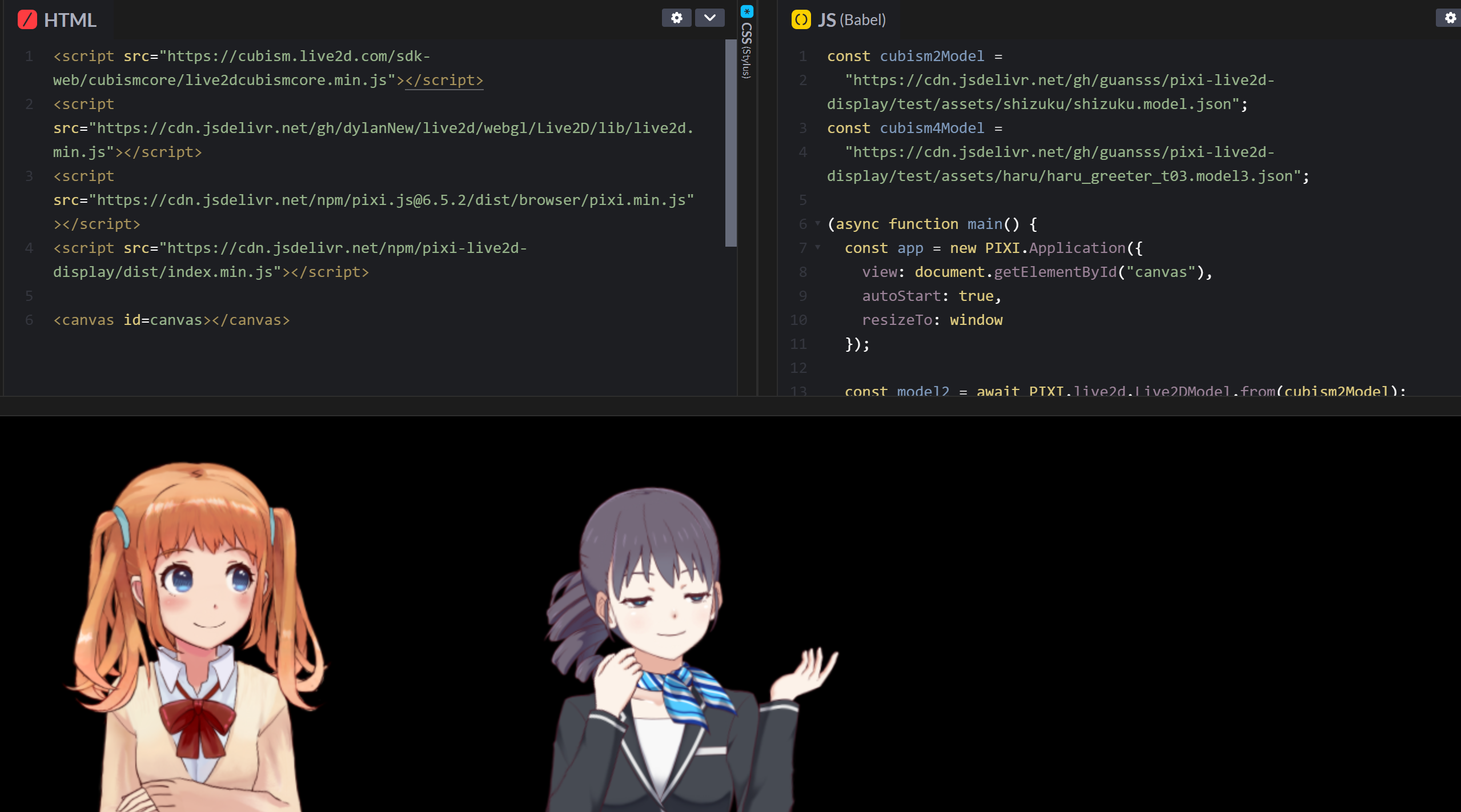

I asked one of my Claw agents, which has basically replaced ChatGPT for ad hoc questions, what options might exist for a puppeteering solution.

“Why not use pixi?”

“What’s that?”

“It’s a full puppeteering system, and there’s some examples.”

“What?!”

So what I learned is that there is an anime and manga style puppeteering system, with face tracking capabilities and more, that just works out of the box. The base setup code is token-efficient, so your AI models can cook something up from it quite easily. The two models below are options in one of the repo examples.

Turns out this repo has existed for a while too. Incredible. You can launch your anime and manga experience by just pointing a code agent at that repo, and you are off to the races. Nano Banana 2 does a pretty decent job of updating the textures, though you still have a little cleanup to do.

I am not convinced about the character aesthetics, but I did explain to my wife that the new lobster themed dress would be a cult hit one day. If you want the costume prompt, just use this:

The Background World Engine

My Claws often do work while I am doing other things. So it is not enough to have a character. I wanted the Claw to have its own world, and I just wanted to come visit like a tourist from time to time. So I reasoned it out in my meathead that what I wanted was a world engine.

A world engine, to me, is just a pile of scripts that creates a convincing facade of a living, simulated space. It is the hidden code that brings NPCs to life in Skyrim, the background actions of your favorite MMO, or the pulse-shaking fights of your favorite action masher. I will cover the distinction between world engine and state machine in a minute, but first I want to show the early builds that led here.

One path was to use Replicate and build out videos like this. However, I have run experiments with that and there are weird failure modes, so I opted to do something more financially attractive and treat the world like a persistent-state MMO.

The engine was the natural next step. I set up a claw themed anime character as the visual representation of a new OpenClaw instance that would live exclusively on this sensorshell. However, after getting the character running and seeing the Claw send puppet commands to control poses, I realized the void is an incredibly boring place. I needed a world engine.

V0.1 of the world engine was simple: one image. I was not even sure it would work. Somehow the generated image had to be pushed into the view, and if you saw the code you would have the same reaction I did: “that works?” Turns out it did.

V0.2 of the world engine built on that success. Instead of one location, I set up five locations. The backgrounds swap in and out, but I realized I did not want the main character to be static. So in V0.2 the character can pick one of three positions: left, center, or right. This works great. You simply ensure each generated image leaves the right amount of void space for the character to occupy one of those three spots.
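The v0.2 layout can be sketched in a few lines. This is a minimal sketch and not the actual engine code: the location names, the `SLOT_X` fractions, the `Scene` class, and the 800 pixel width are all hypothetical, picked only to illustrate five swappable backgrounds and three character slots.

```python
from dataclasses import dataclass

# Hypothetical location names; the article only says there are five.
LOCATIONS = ["workshop", "kitchen", "dock", "library", "rooftop"]

# Horizontal anchor for each slot as a fraction of screen width (assumed values).
SLOT_X = {"left": 0.25, "center": 0.5, "right": 0.75}


@dataclass
class Scene:
    location: str = LOCATIONS[0]
    slot: str = "center"

    def move_to(self, location: str, slot: str) -> None:
        """Swap the background and place the character in one of three slots."""
        if location not in LOCATIONS:
            raise ValueError(f"unknown location: {location}")
        if slot not in SLOT_X:
            raise ValueError(f"unknown slot: {slot}")
        self.location = location
        self.slot = slot

    def character_x(self, screen_width: int) -> int:
        """Pixel x-coordinate of the character's anchor for the current slot."""
        return round(SLOT_X[self.slot] * screen_width)


scene = Scene()
scene.move_to("dock", "left")
print(scene.location, scene.character_x(800))  # dock 200
```

Keeping the slot as a fraction of the width, rather than a fixed pixel value, means the same generated backgrounds could be reused if the display size ever changes.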

Everyone who has stopped by the house and seen this over the weekend said some variant of “whoa, that is awesome.” Yes. Yes it is.

But it can be better.

One Neat Trick

V0.3 of the world engine was about watching the character move from place to place.

While the background transitions were great, I realized that if the character could be seen traveling from point to point, the entire experience of a standalone display would become much more interesting. The advantage of doing so many different vibe games over the last year is that I already had a pretty good sense of what to do next.

The Overworld Engine v0.1

The problem in a lot of visual embodiment work for agents is that it assumes one form factor for the entire display. That creates weird constraints. I liked the anime style view of the character in a place, but that does not work for moving from point to point, and I was not about to animate an entire rig. That is when the thought arrived: why not treat this like an overworld RPG map? Since I am not commercially offering this or making it available to anyone, I have a few extra luxuries.

While searching around for sprite options, I learned that twelve years ago this repo took the time to document a sprite collection from a game, and it works remarkably well for handling overworld transitions. That opened the door for some very interesting v0.2 ideas.

It is not that you want sprite sheets. What you need is annotated sprites. Sure, you could manually do that or attempt to bully a vision model into it, but in the year 2026 there is probably a sprite sheet somewhere that already comes with JSON and enough structure to save you a week.
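To make "annotated sprites" concrete, here is a rough sketch of what that JSON might buy you. The annotation format, the frame names, and the `load_atlas` and `frame_rect` helpers are all made up for illustration; real sprite sheet exports differ in their exact field names.

```python
import json

# Hypothetical annotation format: frame rectangles plus named animations.
ATLAS_JSON = """
{
  "image": "overworld.png",
  "frames": {
    "walk_down_0": {"x": 0,  "y": 0,  "w": 16, "h": 16},
    "walk_down_1": {"x": 16, "y": 0,  "w": 16, "h": 16},
    "walk_left_0": {"x": 0,  "y": 16, "w": 16, "h": 16},
    "walk_left_1": {"x": 16, "y": 16, "w": 16, "h": 16}
  },
  "animations": {
    "walk_down": ["walk_down_0", "walk_down_1"],
    "walk_left": ["walk_left_0", "walk_left_1"]
  }
}
"""


def load_atlas(text: str) -> dict:
    """Parse the annotation JSON and check every animation frame exists."""
    atlas = json.loads(text)
    for name, frame_names in atlas["animations"].items():
        for frame_name in frame_names:
            if frame_name not in atlas["frames"]:
                raise KeyError(f"{name} references missing frame {frame_name}")
    return atlas


def frame_rect(atlas: dict, animation: str, tick: int) -> tuple[int, int, int, int]:
    """Return the (x, y, w, h) crop for an animation at a given tick, looping."""
    names = atlas["animations"][animation]
    frame = atlas["frames"][names[tick % len(names)]]
    return (frame["x"], frame["y"], frame["w"], frame["h"])


atlas = load_atlas(ATLAS_JSON)
print(frame_rect(atlas, "walk_left", 3))  # (16, 16, 16, 16)
```

With the rectangles and animation names already written down, the renderer only has to ask for "walk_left at tick 3" instead of guessing pixel offsets.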

But that is not enough, which is why I built a world editor. This is a quick-cut video from an earlier version, before I resolved a tileset placement issue. Here you can see how I am looking at the world and how the data is organized on the back end: a three-layered data system with one of those layers being a graph network.
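A layered layout like that might look something like the sketch below. Only the graph layer is confirmed by the description above; the tile and place layers, the place names, and the `route` helper are assumptions used to show how a graph layer lets the character travel between points.

```python
from collections import deque

# Assumed layout: the article only says there are three layers and one is a graph.
world = {
    # Layer 1 (assumed): tile grid, one tileset index per cell.
    "tiles": [
        [0, 0, 1, 0],
        [0, 1, 1, 0],
        [0, 0, 1, 2],
    ],
    # Layer 2 (assumed): named places pinned to grid cells as (row, col).
    "places": {"home": (0, 0), "market": (1, 2), "pier": (2, 3)},
    # Layer 3: graph of which places connect directly.
    "graph": {"home": ["market"], "market": ["home", "pier"], "pier": ["market"]},
}


def route(graph: dict[str, list[str]], start: str, goal: str) -> list[str]:
    """Breadth-first search; returns a fewest-hop path, or [] if unreachable."""
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        if path[-1] == goal:
            return path
        for neighbor in graph.get(path[-1], []):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(path + [neighbor])
    return []


path = route(world["graph"], "home", "pier")
print(path)  # ['home', 'market', 'pier']
print([world["places"][name] for name in path])  # [(0, 0), (1, 2), (2, 3)]
```

The graph answers "which places does the character pass through," and the place layer turns that answer into grid cells the overworld sprite can walk between.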

World Engine vs State Machine

When is a world engine just a state machine? “World engine” absolutely sounds like marketing sludge to some people. Fair enough. I consider the soup of scripts and data files to be more like a world. Eventually other Claw agents may find themselves connected into the same world, and I have plans to build my own client-side tool to play along too.

- A state machine has named states and transitions: idle, moving, talking, sleeping.

- A world engine has entities, places, resources, events, memory, and rules that continue to matter even when no one is looking.
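For contrast, here is roughly how small the state machine half of that comparison is. The four state names come from the bullet above; the allowed transitions and the `StateMachine` class are assumptions for illustration.

```python
# Allowed transitions between the four named states (the edges are assumed).
TRANSITIONS = {
    "idle": {"moving", "talking", "sleeping"},
    "moving": {"idle"},
    "talking": {"idle"},
    "sleeping": {"idle"},
}


class StateMachine:
    def __init__(self, state: str = "idle") -> None:
        self.state = state

    def go(self, new_state: str) -> bool:
        """Move to new_state if the transition is allowed; report success."""
        if new_state in TRANSITIONS[self.state]:
            self.state = new_state
            return True
        return False


machine = StateMachine()
print(machine.go("moving"))    # True
print(machine.go("sleeping"))  # False, it has to return to idle first
print(machine.state)           # moving
```

Everything this object knows is a single string. It has no places, no history, and nothing that outlives it, which is exactly the gap the second bullet describes.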

Is a complex flow in n8n or Airflow a world engine? No. I view those as orchestration tools. A world engine has system dynamics properties. A system might look like a world if any of these are true:

- persistent entities with identities

- shared world state outside the flow run

- events accumulating over time

- multiple agents acting on the same model

- rules that govern interactions independent of a single workflow execution

- clients or observers viewing the same underlying reality differently
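A few of those properties fit into a toy sketch. This is not the engine described here; the `Entity` and `World` classes, the energy rule, and the JSON round trip are hypothetical stand-ins for persistent entities, an accumulating event log, shared state, and rules that run regardless of who is watching.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class Entity:
    name: str
    place: str
    energy: int = 10


@dataclass
class World:
    entities: dict[str, Entity] = field(default_factory=dict)
    events: list[dict] = field(default_factory=list)
    tick_count: int = 0

    def act(self, who: str, verb: str, target: str) -> None:
        """Any agent can act on the shared model; every action is recorded."""
        entity = self.entities[who]
        if verb == "move":
            entity.place = target
        self.events.append(
            {"tick": self.tick_count, "who": who, "verb": verb, "target": target}
        )

    def tick(self) -> None:
        """A rule that applies whether or not anything is watching."""
        self.tick_count += 1
        for entity in self.entities.values():
            entity.energy = max(0, entity.energy - 1)

    def to_json(self) -> str:
        """Serialize so the state can outlive a single run."""
        return json.dumps(asdict(self))

    @classmethod
    def from_json(cls, text: str) -> "World":
        """Rebuild the world, including entities and event history."""
        data = json.loads(text)
        entities = {k: Entity(**v) for k, v in data["entities"].items()}
        return cls(entities=entities, events=data["events"], tick_count=data["tick_count"])


world = World()
world.entities["claw"] = Entity("claw", "home")
world.entities["visitor"] = Entity("visitor", "pier")
world.act("claw", "move", "market")
world.tick()
world.act("visitor", "move", "market")

restored = World.from_json(world.to_json())
print(restored.entities["claw"].place)      # market
print(restored.entities["claw"].energy)     # 9
print([e["who"] for e in restored.events])  # ['claw', 'visitor']
```

The restored copy still knows who exists, where they are, and what happened, which is the part a bare list of states and transitions does not carry.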

So yes, if you squint hard enough, parts of this look like a state machine. That is fine. Plenty of useful things are state machines. A toaster is basically a state machine with a heat addiction. n8n is a state machine wearing a lanyard. Airflow is a state machine with opinions about scheduling.

But what I am building here is different in kind, not just complexity. The display is not the system. The pose changes are not the system. The background swaps are not the system. Those are just the visible consequences of a deeper pile of weird little rules, persistent data, mapped spaces, event history, and actors that can keep doing things whether I am staring at the screen or off doing something else.

That is why I call it a world engine. Not because it sounds cool, though obviously it does, but because “state machine” stops too early. A state machine tells you what happens next. A world engine tells you what exists, what changed, who did it, and what might happen now that the system has to live with the consequences.

Right now, that world is small. It is a lobster themed anime claw living inside a Raspberry Pi acrylic sandwich with delusions of grandeur. But it has a place. It has memory. It has structure. It has the beginnings of continuity. Soon it may have other agents wandering in, and a human client poking at it from the side like some kind of confused dungeon master.

That, to me, is the interesting part. Not just making an AI say things. Not even making it move. Making it exist somewhere. Even if that somewhere currently looks like a very overengineered electronic lunch tray.